Supported Providers

- AWS Bedrock

- OpenAI

- Anthropic

- Azure OpenAI

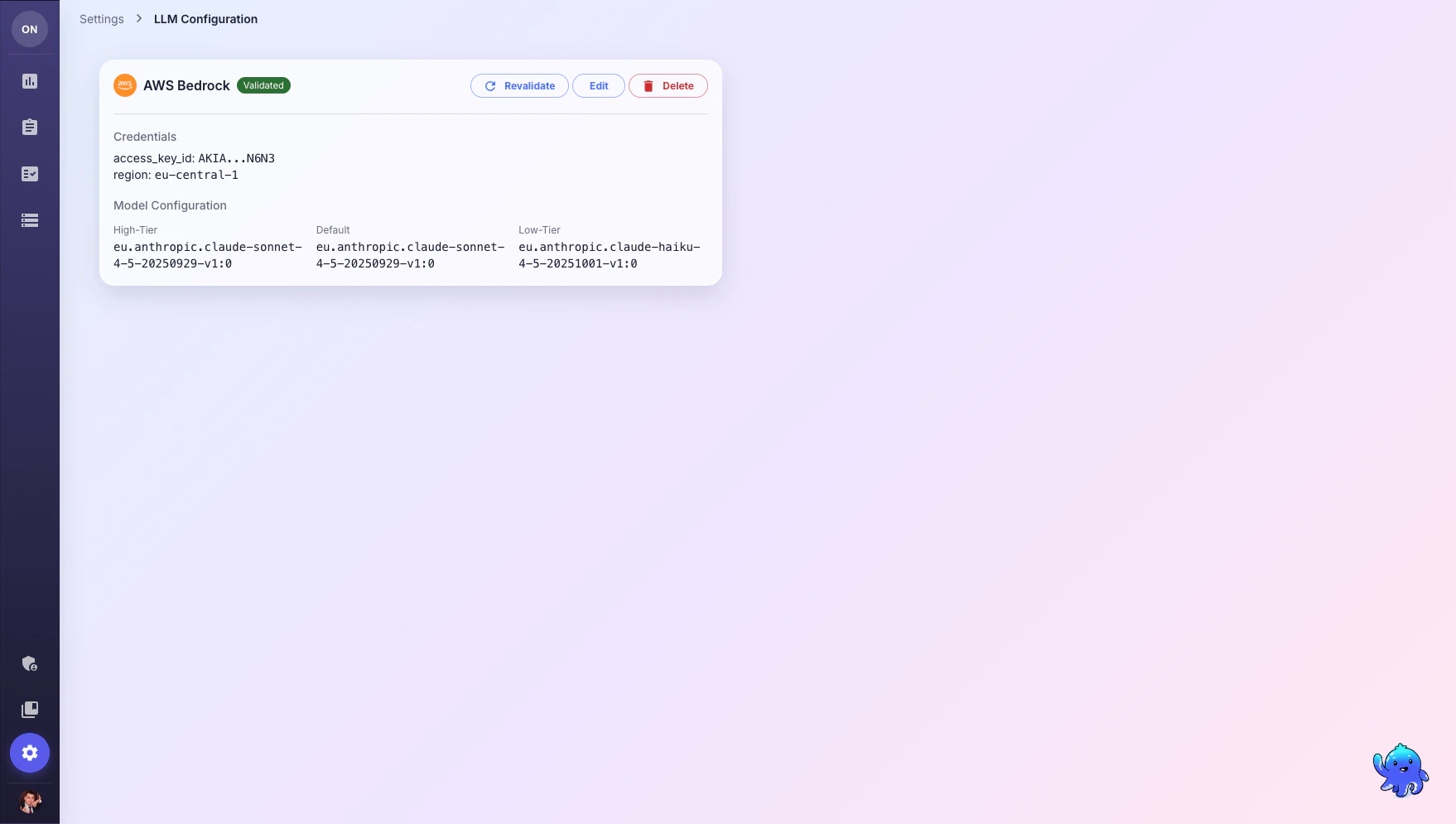

Required fields for AWS Bedrock:

- Access Key ID

- Secret Access Key

- Region (e.g., us-east-1, eu-central-1)

Recommended models:

- High-Tier: anthropic.claude-sonnet-4-5-20250929-v1:0

- Default: anthropic.claude-sonnet-4-5-20250929-v1:0

- Low-Tier: anthropic.claude-haiku-4-5-20251001-v1:0
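Before entering these values in the provider form, it can help to sanity-check them locally. The sketch below is a hypothetical helper, not part of OneTest: the class name BedrockSettings, its field names, and the region pattern are assumptions made for illustration. It only checks that no field is empty and that the region looks like a single AWS region code; it does not contact AWS or verify credentials.

```python
import re
from dataclasses import dataclass
from typing import List

# Assumed shape of an AWS region code such as us-east-1 or eu-central-1.
# This is a loose format check, not an authoritative list of regions.
REGION_PATTERN = re.compile(r"^[a-z]{2}(-[a-z]+)+-\d$")


@dataclass
class BedrockSettings:
    """Hypothetical container for the values entered in the provider form."""
    access_key_id: str
    secret_access_key: str
    region: str
    high_tier_model: str
    default_model: str
    low_tier_model: str

    def problems(self) -> List[str]:
        """Return human-readable issues; an empty list means nothing obvious is wrong."""
        issues = []
        # Every field is required, so flag any that are blank.
        for name, value in vars(self).items():
            if not value.strip():
                issues.append(f"{name} is empty")
        # The form takes one region, so a comma-separated pair is rejected.
        if self.region.strip() and not REGION_PATTERN.match(self.region):
            issues.append(
                f"region {self.region!r} does not look like an AWS region code"
            )
        return issues


settings = BedrockSettings(
    access_key_id="EXAMPLE_KEY_ID",        # placeholder, not a real key
    secret_access_key="EXAMPLE_SECRET",    # placeholder, not a real secret
    region="us-east-1",
    high_tier_model="anthropic.claude-sonnet-4-5-20250929-v1:0",
    default_model="anthropic.claude-sonnet-4-5-20250929-v1:0",
    low_tier_model="anthropic.claude-haiku-4-5-20251001-v1:0",
)
print(settings.problems())
```

Returning a list of issues rather than raising on the first one lets you fix every blank or malformed value in a single pass.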

Model Tiers

OneTest uses three model tiers for different task complexities:

| Tier | Purpose | Examples |
|---|---|---|
| High-Tier (reasoning) | Complex analysis, test generation from requirements | Generating comprehensive test suites, analyzing coverage gaps |
| Default (balanced) | General-purpose tasks | Answering questions, searching tests, simple generation |
| Low-Tier (fast) | Quick, simple operations | Summarization, classification, simple lookups |
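To make the tier split concrete, here is a minimal sketch of how a task could be mapped to a tier and then to a model identifier. How OneTest routes tasks internally is not described here, so the task labels, the Tier enum, and the model_for_task function are all hypothetical; only the three tier names and the Bedrock model identifiers come from this page.

```python
from enum import Enum


class Tier(Enum):
    HIGH = "High-Tier"
    DEFAULT = "Default"
    LOW = "Low-Tier"


# Bedrock model identifiers listed on this page; swap in your own deployments.
MODEL_FOR_TIER = {
    Tier.HIGH: "anthropic.claude-sonnet-4-5-20250929-v1:0",
    Tier.DEFAULT: "anthropic.claude-sonnet-4-5-20250929-v1:0",
    Tier.LOW: "anthropic.claude-haiku-4-5-20251001-v1:0",
}

# Hypothetical task labels, loosely based on the Examples column of the table.
TIER_FOR_TASK = {
    "generate_test_suite": Tier.HIGH,
    "analyze_coverage_gaps": Tier.HIGH,
    "answer_question": Tier.DEFAULT,
    "search_tests": Tier.DEFAULT,
    "summarize": Tier.LOW,
    "classify": Tier.LOW,
}


def model_for_task(task: str) -> str:
    """Pick a model for a task label, falling back to the Default tier."""
    tier = TIER_FOR_TASK.get(task, Tier.DEFAULT)
    return MODEL_FOR_TIER[tier]


print(model_for_task("summarize"))
print(model_for_task("generate_test_suite"))
```

Falling back to the Default tier for unknown tasks mirrors its "general-purpose" role in the table: an unrecognized request gets the balanced model rather than the most expensive or the fastest one.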

Setup Steps

Configure Model Tiers

Enter model identifiers for each tier. Use the recommended models above or your own deployments.

What’s Next?

- AI Assistant: Start using the AI Assistant to generate and search tests.

- Integrations and API Keys: Connect CI/CD pipelines and external tools via API keys.